Georgia AI: Building On-Demand Sales Coaching That Works

How we built Georgia's AI role-play engine for real-time sales coaching, what broke first, and what early users actually said.

The Problem With Sales Coaching Is Timing

Sales coaching is expensive. A decent external coach runs $300 to $600 an hour. Most companies budget for two formal sessions a year, maybe four if the quarter is rough. That math does not match how selling actually works.

A rep gets feedback in January. The patterns that cost them three deals in March never get addressed. By the time the next coaching session arrives, the context is gone and they are reviewing a different set of mistakes.

The knowledge exists. The willingness to improve exists. The timing is completely broken.

Georgia was built to fix the timing problem. The goal is not to replace human coaches but to make practice available at 2 a.m. the night before a call that matters.

What Georgia Actually Does

Georgia is a role-play SaaS platform where salespeople practice live conversations against AI buyers. Not static scripts. Not quiz-style flashcards. Actual back-and-forth dialogue where the AI buyer has a persona, a budget concern, a competitor they already use, and a specific emotional temperature.

A rep chooses a scenario: enterprise software demo, pricing negotiation, cold outreach follow-up, or objection handling after a prospect has gone silent. The AI buyer responds based on that context and adapts to what the rep says. Stall with filler words and the buyer gets impatient. Push price too hard and they go cold. Nail the value framing and the conversation opens up.
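The persona fields described above map naturally onto a small data structure. The sketch below is a minimal illustration, assuming the scenario is rendered into plain-text instructions for whatever model plays the buyer; `BuyerPersona`, `Scenario`, `Temperature`, and `briefing()` are hypothetical names, not Georgia's actual schema.

```python
from dataclasses import dataclass
from enum import Enum


class Temperature(Enum):
    # Hypothetical starting emotional states for the AI buyer.
    CURIOUS = "curious"
    SKEPTICAL = "skeptical"
    IMPATIENT = "impatient"
    COLD = "cold"


@dataclass(frozen=True)
class BuyerPersona:
    role: str                  # e.g. "VP of Finance"
    budget_concern: str        # the money objection the buyer carries in
    incumbent_competitor: str  # what the buyer already uses today
    temperature: Temperature   # emotional state at the start of the call


@dataclass(frozen=True)
class Scenario:
    name: str                  # e.g. "pricing negotiation"
    persona: BuyerPersona

    def briefing(self) -> str:
        """Render the scenario as plain-text instructions for the buyer model."""
        p = self.persona
        return (
            f"Scenario: {self.name}. You are a {p.role}. "
            f"Your main budget concern: {p.budget_concern}. "
            f"You currently use {p.incumbent_competitor}. "
            f"Start the conversation feeling {p.temperature.value}."
        )


scenario = Scenario(
    name="pricing negotiation",
    persona=BuyerPersona(
        role="VP of Finance",
        budget_concern="a hiring freeze this quarter",
        incumbent_competitor="an in-house spreadsheet process",
        temperature=Temperature.SKEPTICAL,
    ),
)
print(scenario.briefing())
```

Freezing the dataclasses keeps a scenario fixed for the length of a session, so any change in the buyer's mood has to come from the conversation rather than from the setup.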

After each session, Georgia scores the call. Not with a vague 7 out of 10. With specific feedback: where momentum dropped, which objections were dodged instead of addressed, how many filler phrases appeared in the first 90 seconds.
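As an illustration of the last metric, here is a minimal sketch of counting filler phrases in the rep's first 90 seconds. It assumes a timestamped transcript split into segments by speaker; the `Segment` type, the `FILLERS` list, and `count_fillers()` are hypothetical, and a naive word match like this will also count legitimate uses of words such as "like".

```python
import re
from collections import Counter
from dataclasses import dataclass

# Hypothetical filler list; a real product would tune this per team and language.
FILLERS = ("um", "uh", "you know", "kind of", "sort of", "basically", "like")


@dataclass
class Segment:
    start_s: float  # seconds from the start of the call
    speaker: str    # "rep" or "buyer"
    text: str


def count_fillers(segments, window_s=90.0, fillers=FILLERS):
    """Count filler phrases in rep segments that start inside the first window_s seconds."""
    # Word boundaries keep "um" from matching inside "umbrella", "like" inside "likely", etc.
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(f) for f in fillers) + r")\b",
        re.IGNORECASE,
    )
    counts = Counter()
    for seg in segments:
        if seg.speaker != "rep" or seg.start_s >= window_s:
            continue  # only the rep's speech inside the window is scored
        counts.update(m.group(0).lower() for m in pattern.finditer(seg.text))
    return counts


transcript = [
    Segment(5.0, "rep", "Um, so basically we, um, help teams like yours."),
    Segment(40.0, "buyer", "Uh, okay."),
    Segment(120.0, "rep", "Um, anyway."),
]
print(count_fillers(transcript))
```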

The rep can replay the session, try a different approach, and run it again before breakfast.

The Hard Part: Making the AI Buyer Feel Real

This is where most teams underestimate the build. Getting an AI to talk back is not the challenge. Getting it to talk back in a way that actually trains the rep is.

Our first version failed quickly. The AI buyer was too agreeable. Reps would practice their pitch, the buyer would respond with polite interest, and the session ended feeling productive. Then they got on a real call and the actual buyer interrupted them in the second sentence with a price objection they had never practiced handling.

We rebuilt the persona engine around three things.

First, persona memory. The AI buyer needed to remember what was said earlier in the conversation and hold the rep accountable to it. If a rep promised ROI in 90 days in minute two and then contradicted that claim in minute six, the buyer needed to notice.
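A minimal sketch of persona memory, assuming an upstream step (not shown, and hypothetical) has already extracted the rep's claims into normalized topic/value pairs, such as an ROI timeframe in days. `Claim` and `PersonaMemory` are illustrative names. The memory keeps the first version of each claim so the buyer can hold the rep to the original promise.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass(frozen=True)
class Claim:
    topic: str    # hypothetical normalized key, e.g. "roi_days"
    value: object
    minute: int   # when in the call the rep said it


@dataclass
class PersonaMemory:
    claims: Dict[str, Claim] = field(default_factory=dict)

    def record(self, claim: Claim) -> Optional[str]:
        """Store a claim; return a challenge if it contradicts an earlier one."""
        earlier = self.claims.get(claim.topic)
        if earlier is None:
            # First time the rep commits on this topic: remember it.
            self.claims[claim.topic] = claim
            return None
        if earlier.value != claim.value:
            # Keep the original promise on file and have the buyer call out the change.
            return (
                f"In minute {earlier.minute} you said {earlier.value} for "
                f"{claim.topic}. Now it's {claim.value}. Which one is it?"
            )
        return None


memory = PersonaMemory()
memory.record(Claim("roi_days", 90, minute=2))
print(memory.record(Claim("roi_days", 180, minute=6)))
```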

Second, objection escalation. Real objections do not appear once and disappear when answered. They come back harder if the answer was weak. We engineered an escalation layer so that a deflected objection would return with more specificity two exchanges later.
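One way to sketch the escalation layer, assuming some grader (not shown) labels each answer as handled well or deflected: a weakly handled objection is scheduled to return two exchanges later using a more specific phrasing. `Objection`, `EscalationTracker`, and the example phrasings are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Objection:
    key: str
    phrasings: List[str]  # ordered from general to most specific
    level: int = 0        # index of the phrasing the buyer will use next


class EscalationTracker:
    """Re-raise weakly handled objections, more specifically, a few exchanges later."""

    def __init__(self, delay: int = 2):
        self.delay = delay  # exchanges before a deflected objection returns
        self.turn = 0
        self.pending: List[Tuple[int, Objection]] = []  # (due turn, objection)

    def record_answer(self, objection: Objection, answered_well: bool) -> None:
        if answered_well:
            return  # a solid answer is not scheduled to come back
        # Sharpen the wording, capped at the most specific phrasing available.
        objection.level = min(objection.level + 1, len(objection.phrasings) - 1)
        self.pending.append((self.turn + self.delay, objection))

    def next_turn(self) -> List[str]:
        """Advance one exchange and return the objections the buyer raises again now."""
        self.turn += 1
        due = [obj for t, obj in self.pending if t <= self.turn]
        self.pending = [(t, obj) for t, obj in self.pending if t > self.turn]
        return [obj.phrasings[obj.level] for obj in due]


price = Objection("price", [
    "This seems expensive.",
    "Your quote is well above what we pay today. What justifies the difference?",
])
tracker = EscalationTracker()
tracker.record_answer(price, answered_well=False)  # the rep deflects
print(tracker.next_turn())  # nothing is due yet
print(tracker.next_turn())  # the sharper phrasing comes back
```

If the returning objection is deflected again, calling `record_answer` a second time pushes it one level more specific, which is the "comes back harder" behavior described above.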

Third, tone modulation. Buyers do not stay at the same emotional state throughout a call. We built in trigger points where the AI buyer shifts from curious to skeptical, from skeptical to checked out, and occasionally from resistant to genuinely interested if the rep earns it. Reps had to learn to read and respond to those shifts in real time.
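The tone shifts can be sketched as a small state machine. The states follow the paragraph above; the two input signals, the transition table, and the rule that a resistant buyer needs two consecutive strong answers before turning interested are assumptions made for illustration, not Georgia's actual trigger points.

```python
from enum import Enum, auto


class Tone(Enum):
    CURIOUS = auto()
    SKEPTICAL = auto()
    CHECKED_OUT = auto()
    RESISTANT = auto()
    INTERESTED = auto()


class Signal(Enum):
    WEAK_ANSWER = auto()    # dodge, filler-heavy stall, unsupported claim
    STRONG_ANSWER = auto()  # direct, specific, value-framed response


# Hypothetical trigger table: (current tone, rep signal) -> next tone.
# Pairs not listed leave the tone unchanged; in this sketch CHECKED_OUT is sticky.
TRANSITIONS = {
    (Tone.CURIOUS, Signal.WEAK_ANSWER): Tone.SKEPTICAL,
    (Tone.SKEPTICAL, Signal.WEAK_ANSWER): Tone.CHECKED_OUT,
    (Tone.SKEPTICAL, Signal.STRONG_ANSWER): Tone.CURIOUS,
    (Tone.INTERESTED, Signal.WEAK_ANSWER): Tone.SKEPTICAL,
}


class BuyerTone:
    def __init__(self, start: Tone = Tone.CURIOUS, strong_needed: int = 2):
        self.tone = start
        self.strong_needed = strong_needed  # strong answers in a row to win over a resistant buyer
        self.strong_streak = 0

    def update(self, signal: Signal) -> Tone:
        # Any weak answer resets the streak of strong ones.
        self.strong_streak = self.strong_streak + 1 if signal is Signal.STRONG_ANSWER else 0
        if self.tone is Tone.RESISTANT:
            # Resistance only gives way after a sustained run of strong answers.
            if self.strong_streak >= self.strong_needed:
                self.tone = Tone.INTERESTED
        else:
            self.tone = TRANSITIONS.get((self.tone, signal), self.tone)
        return self.tone


buyer = BuyerTone(start=Tone.RESISTANT)
print(buyer.update(Signal.STRONG_ANSWER))  # Tone.RESISTANT
print(buyer.update(Signal.STRONG_ANSWER))  # Tone.INTERESTED
```

Keeping the transitions in a table rather than in branching code makes the trigger points easy to inspect and tune per persona.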

It took three rebuild cycles before early users stopped describing the experience as talking to a chatbot.

What the First Users Actually Said

The initial feedback cohort included sales reps at two SaaS companies and one professional services firm. We expected them to use Georgia for pre-call prep. Most of them did. But the pattern that surprised us was post-call debriefing.

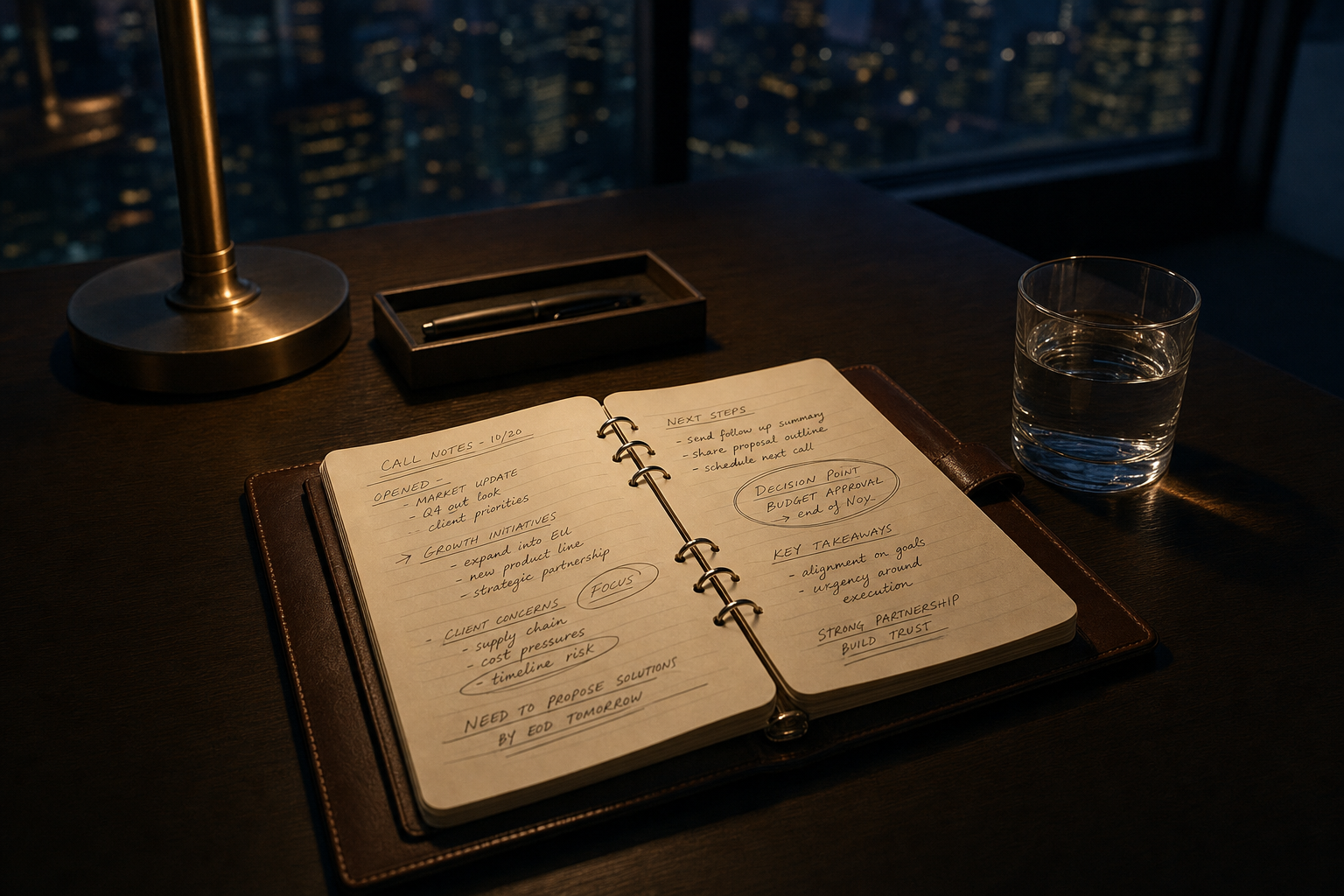

Reps would finish a real call that went wrong, come back to Georgia within hours, and reconstruct the scenario to figure out where they lost it. They were not just preparing. They were processing.

One rep described losing a deal because a procurement question caught him off guard. He came back to Georgia, built a scenario with that exact procurement angle, and ran it six times until the answer felt automatic. He said it was the first time he had ever actually fixed a mistake instead of just feeling bad about it.

That told us something important. The gap in sales training is not just frequency. It is the inability to act on a mistake immediately, while the memory is still sharp and the motivation is still high. Georgia lets reps act inside that window instead of waiting months for the next session.

Some friction points came through clearly. Reps wanted more industry-specific buyer personas. A SaaS buyer and a manufacturing operations director need different vocabularies and different emotional profiles. We are expanding the persona library. They also wanted session history they could share with managers, which is now on the product roadmap as a team coaching view.

A Practical Takeaway

If you are building any kind of AI training or simulation product, the first question to answer is not what the AI says. It is what happens when the user gives a weak response. Does the simulation let them off the hook, or does it hold pressure the way a real situation would?

The answer to that question determines whether your product builds real skill or just builds confidence. Those are not the same thing.

Georgia is still being refined. The early results are strong enough that we are expanding the scenario library and moving toward team-level analytics. But the core insight from building it holds: realistic simulation requires the AI to fail the user when they deserve to fail. Getting that right is most of the work.